Codex 5.3 and Claude Opus 4.6 are the big names right now. Most days, it feels like a trade-off between quality, speed, and cost. That’s why I’m testing GLM-5. It isn’t “new,” but it may be a strong value. If it performs well for less money, it’s worth a real look.

I’m not judging it on autocomplete alone. I want to see how it handles agent-style work. Can it plan a change, edit several files, and stay consistent? Can it refactor code, write tests, and follow steps without getting lost? If it can do that reliably and keep costs down, it can earn a spot in my stack.

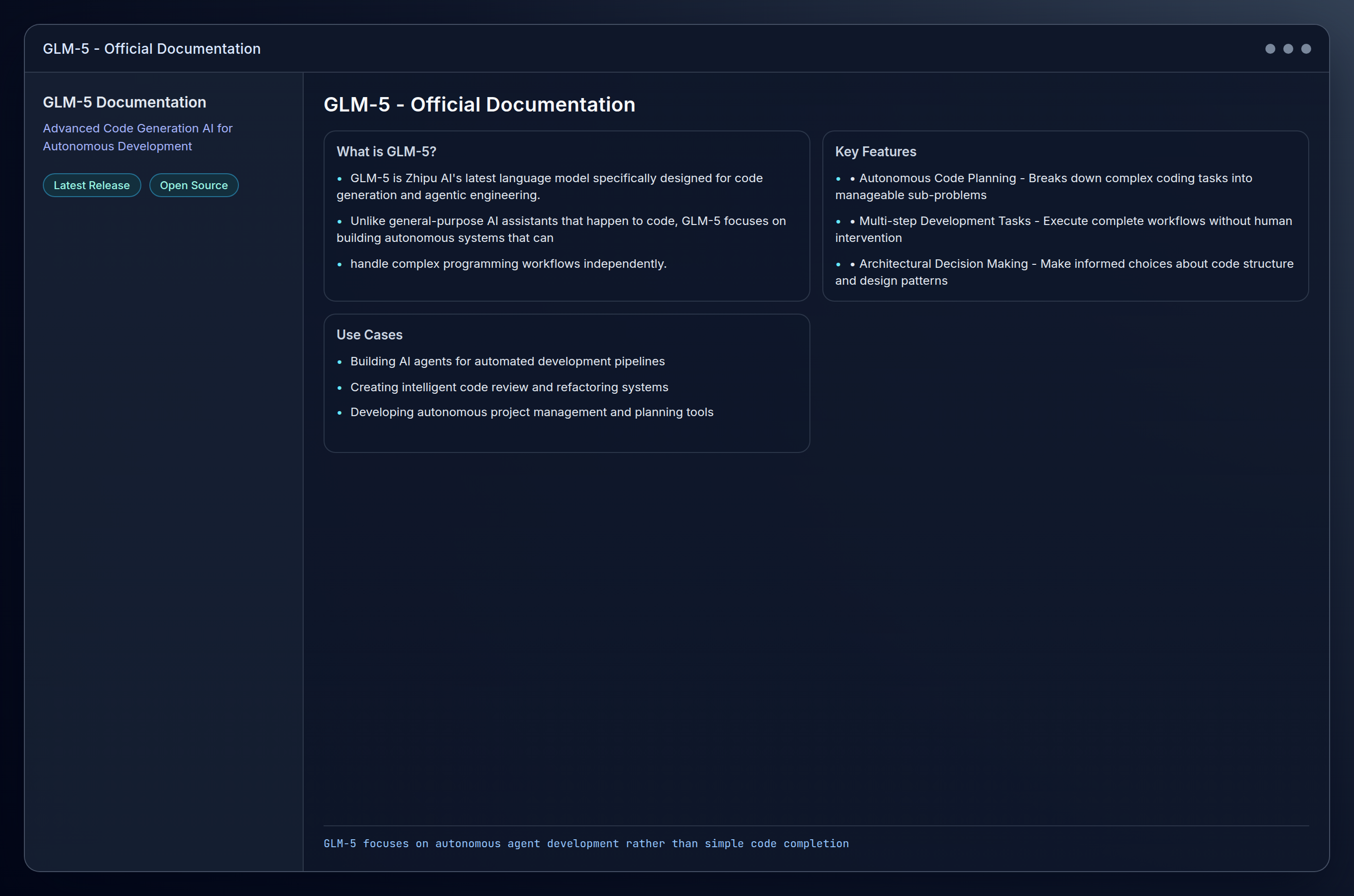

What is GLM-5?

GLM-5 is Zhipu AI’s latest language model specifically designed for code generation and agentic engineering. Unlike general-purpose AI assistants that happen to code, GLM-5 focuses on building autonomous systems that can handle complex programming workflows independently.

The positioning is interesting: Zhipu AI isn’t trying to be everything to everyone. Instead of competing directly with Copilot on autocomplete, the company is carving out the autonomous agent space. The model is designed for creating AI agents that don’t just suggest code, but actively plan projects, execute multi-step development tasks, and make architectural decisions.

Key Features:

- Autonomous Code Planning: Breaks down complex coding tasks into manageable sub-problems

- Multi-Language Support: Handles Python, JavaScript, Java, C++, and other major languages

- Agent Architecture: Built specifically for creating persistent coding agents

- Context Management: Maintains project-wide understanding across multiple files and sessions

- API-First Design: Designed for integration into existing development workflows

Who Should Use GLM-5?

GLM-5 targets developers looking to move beyond traditional AI coding assistance toward genuine automation. After testing the initial access, I can see where this fits.

Software Engineers working on repetitive tasks like API development, test generation, or code refactoring will find GLM-5’s autonomous capabilities particularly valuable for reducing manual overhead. The model seems to excel when you can hand it a clear objective and step back.

DevOps Teams can leverage GLM-5 to build agents that monitor codebases, suggest optimizations, and even implement routine maintenance tasks without constant supervision.

AI Researchers experimenting with autonomous development workflows will appreciate GLM-5’s focus on agentic behavior rather than simple text completion. This is clearly where Zhipu AI spent their design effort.

Startup Teams with limited engineering resources might use GLM-5 to automate portions of their development pipeline, effectively scaling their coding capacity.

It may not be the best fit if:

- You’re looking for simple IDE integration like GitHub Copilot

- Your primary need is general-purpose AI assistance beyond coding

- You work primarily with legacy systems that require extensive domain knowledge

- You prefer established, battle-tested tools over newer alternatives

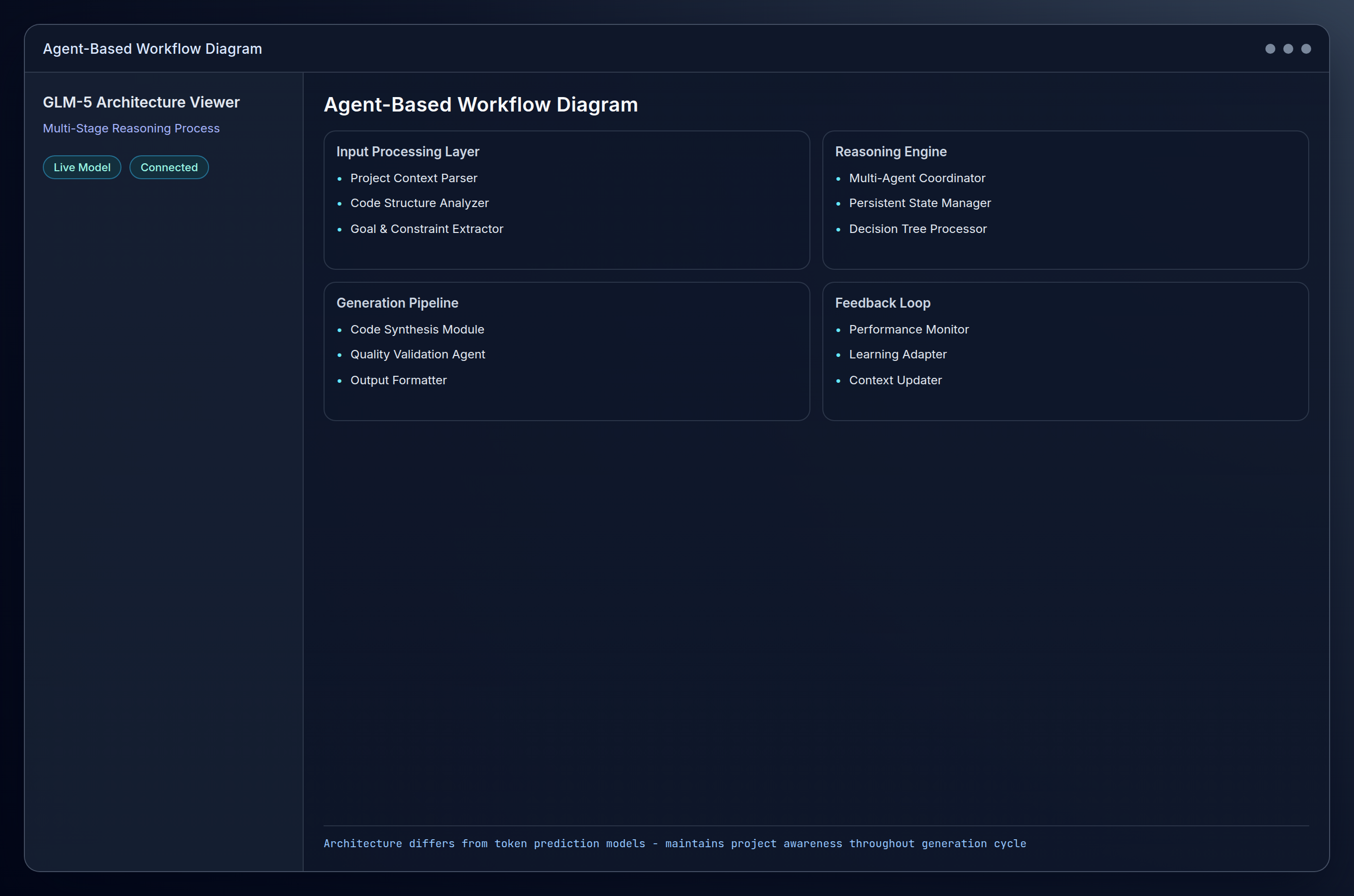

How GLM-5 Works

GLM-5 behaves differently from traditional code completion tools. Instead of only predicting the next few tokens from the immediate context, it appears to maintain a persistent understanding of project structure, goals, and constraints. This becomes apparent pretty quickly when you start using it.

Technical Foundation

The model uses a multi-stage reasoning process:

- Task Analysis: Breaks down high-level requests into specific, actionable steps

- Context Gathering: Analyzes existing codebase, dependencies, and project structure

- Solution Planning: Creates a detailed implementation plan with fallback strategies

- Execution Monitoring: Tracks progress and adjusts approach based on intermediate results

- Quality Validation: Tests generated code and suggests improvements

This approach enables GLM-5 to handle requests like “refactor this module for better performance” or “add comprehensive test coverage” as complete workflows rather than individual code snippets. When it works, it feels less like using a tool and more like delegating to a junior developer who actually follows through.
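To make the stage order concrete, here is a minimal sketch of the five stages chained as plain Python functions. It is a toy illustration of the workflow described above, not GLM-5's internals: the `WorkflowState` class, the stage functions, and the placeholder file list are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class WorkflowState:
    """Everything the toy pipeline knows about one request (hypothetical)."""
    request: str
    steps: List[str] = field(default_factory=list)
    files: List[str] = field(default_factory=list)
    plan: List[str] = field(default_factory=list)
    completed: List[str] = field(default_factory=list)
    validated: bool = False


def analyze_task(state: WorkflowState) -> WorkflowState:
    # Task Analysis: split the request into separate actionable steps.
    state.steps = [s.strip() for s in state.request.split(" and ") if s.strip()]
    return state


def gather_context(state: WorkflowState) -> WorkflowState:
    # Context Gathering: a real agent would scan the repository here.
    state.files = ["app.py", "tests/test_app.py"]  # placeholder file list
    return state


def plan_solution(state: WorkflowState) -> WorkflowState:
    # Solution Planning: pair each step with the files it may touch.
    touched = ", ".join(state.files)
    state.plan = [f"{step} (files: {touched})" for step in state.steps]
    return state


def execute_plan(state: WorkflowState) -> WorkflowState:
    # Execution Monitoring: record each plan item as it finishes.
    for item in state.plan:
        state.completed.append(item)
    return state


def validate(state: WorkflowState) -> WorkflowState:
    # Quality Validation: pass only if every planned item completed.
    state.validated = bool(state.plan) and state.completed == state.plan
    return state


STAGES: List[Callable[[WorkflowState], WorkflowState]] = [
    analyze_task, gather_context, plan_solution, execute_plan, validate,
]


def run_workflow(request: str) -> WorkflowState:
    """Run every stage in order, passing the shared state along."""
    state = WorkflowState(request=request)
    for stage in STAGES:
        state = stage(state)
    return state


if __name__ == "__main__":
    result = run_workflow("refactor this module and add test coverage")
    print(result.steps)
    print(result.validated)
```

The point of the shape is that each stage reads and extends one shared state object, which is what lets a later stage (validation) check the work of an earlier one (planning).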

Key Features

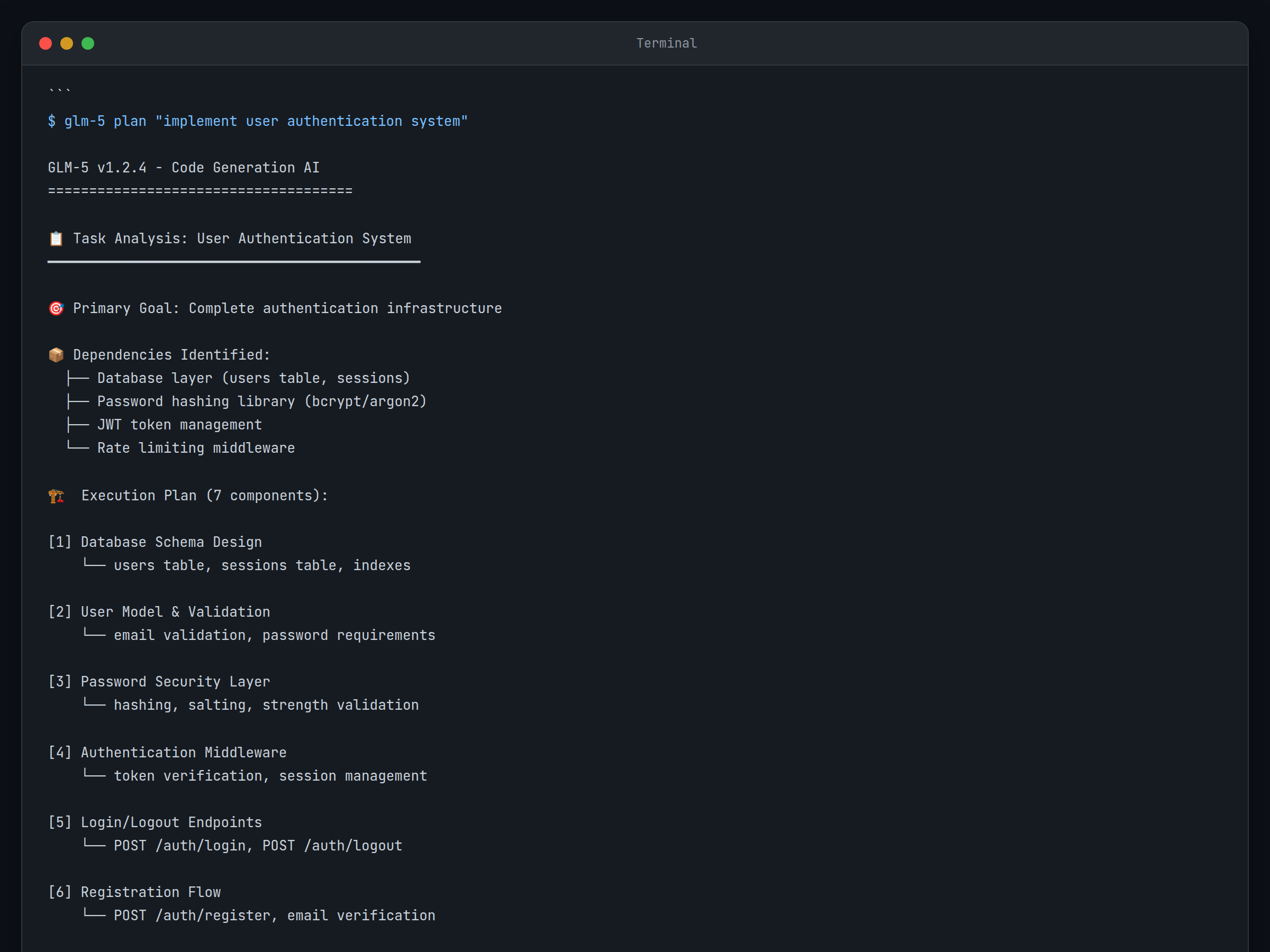

Autonomous Task Planning

GLM-5’s standout feature is its ability to decompose complex coding requests into executable plans. When you ask it to “implement user authentication,” it doesn’t just generate a login function; it plans the entire authentication system, including database schemas, middleware, error handling, and security considerations.

The first time I watched it break down a complex request, I was genuinely surprised by how thorough the planning phase was. It actually thinks through dependencies and edge cases before writing any code.
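One way to picture "thinking through dependencies" is as a topological sort over subtasks. The sketch below uses Python's standard `graphlib` module; the subtask names and their prerequisites are assumptions made up for the authentication example, not output captured from GLM-5.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical subtasks for "implement user authentication".
# Each key maps to the set of subtasks that must be finished first.
AUTH_TASKS: Dict[str, Set[str]] = {
    "database schema": set(),
    "error handling": set(),
    "user model": {"database schema"},
    "login endpoint": {"user model", "error handling"},
    "auth middleware": {"login endpoint"},
    "tests": {"login endpoint", "auth middleware"},
}


def plan_order(tasks: Dict[str, Set[str]]) -> List[str]:
    """Return the subtasks in an order that respects every prerequisite.

    Raises graphlib.CycleError if the prerequisites contradict each other.
    """
    return list(TopologicalSorter(tasks).static_order())


if __name__ == "__main__":
    for position, task in enumerate(plan_order(AUTH_TASKS), start=1):
        print(position, task)
```

Several valid orders can exist for the same plan, so the exact sequence printed may vary; what is guaranteed is that no subtask appears before its prerequisites.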

Multi-File Context Awareness

Unlike models that work on individual files, GLM-5 maintains awareness across your entire project. It understands how changes in one module affect others, suggests appropriate import statements, and ensures consistency in naming conventions and architectural patterns.

This is where you really notice the difference from standard autocomplete tools. It’s keeping track of your project structure and making decisions based on the broader context, not just what’s in the current file.
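To show what "understanding how changes in one module affect others" can mean in practice, here is a small sketch that builds an import graph with Python's standard `ast` module and walks it in reverse. It only illustrates the idea; the function names and the four-module sample project are hypothetical, and nothing here reflects how GLM-5 tracks context internally.

```python
import ast
from typing import Dict, Set


def imported_modules(source: str) -> Set[str]:
    """Collect top-level module names imported by a piece of source code."""
    found: Set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            found.add(node.module.split(".")[0])
    return found


def affected_by(changed: str, sources: Dict[str, str]) -> Set[str]:
    """Return project modules that import `changed`, directly or indirectly."""
    # Reverse graph: module name -> project modules that import it.
    importers: Dict[str, Set[str]] = {name: set() for name in sources}
    for name, source in sources.items():
        for dependency in imported_modules(source):
            if dependency in importers:
                importers[dependency].add(name)

    affected: Set[str] = set()
    stack = [changed]
    while stack:
        current = stack.pop()
        for importer in importers.get(current, ()):
            if importer not in affected:
                affected.add(importer)
                stack.append(importer)
    return affected


if __name__ == "__main__":
    # Hypothetical project: module name -> file contents.
    project = {
        "db": "import sqlite3\n",
        "models": "from db import connect\n",
        "api": "import models\n",
        "utils": "import os\n",
    }
    print(sorted(affected_by("db", project)))
```

In the sample project, a change to `db` reaches `models` directly and `api` through `models`, while `utils` is untouched.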

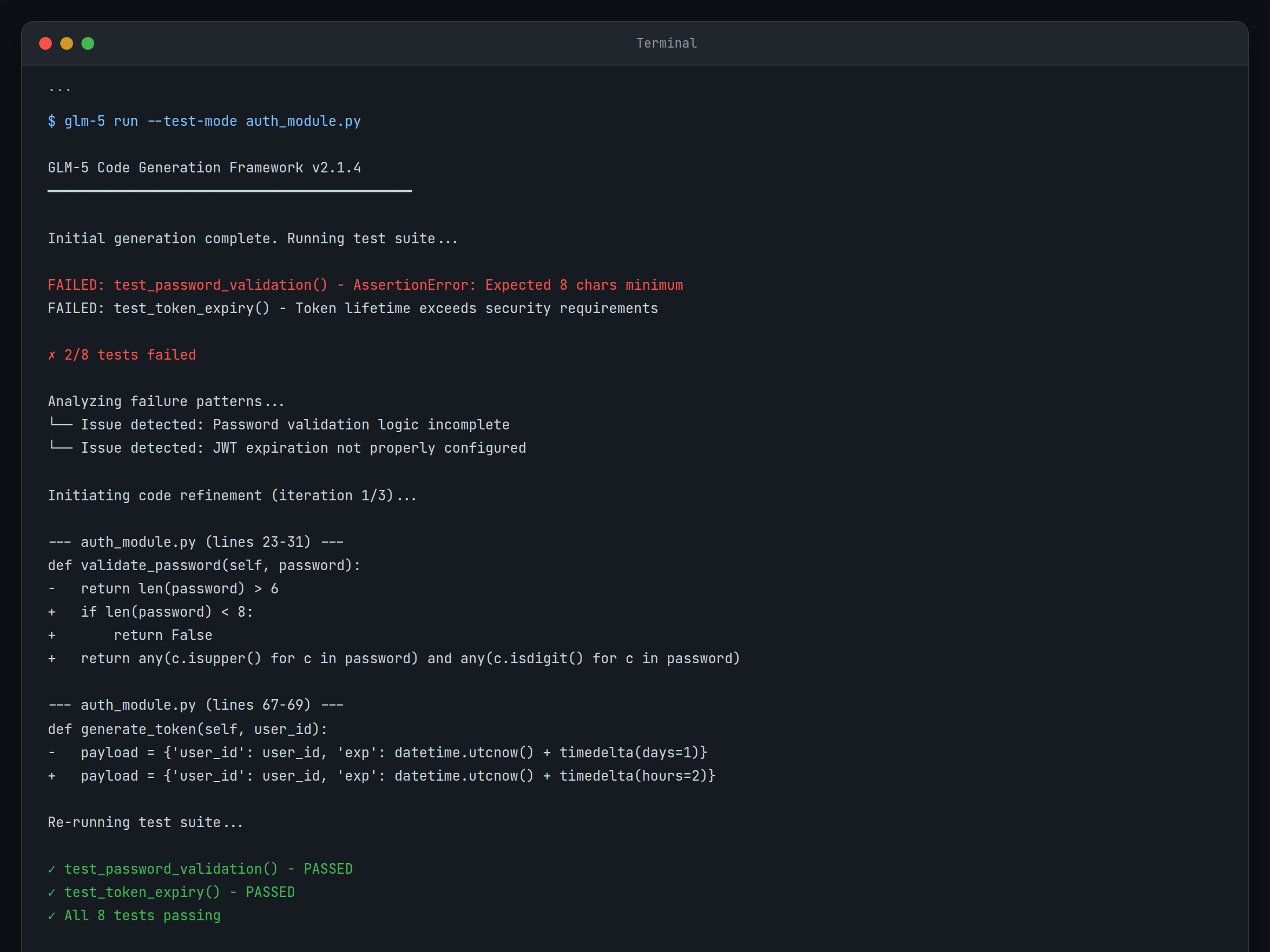

Iterative Code Improvement

The model doesn’t just generate code and move on. It can iterate on its own output. If initial code doesn’t meet performance requirements or fails tests, GLM-5 analyzes the issues and refines its approach automatically.
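The generate, test, refine cycle can be sketched as a short loop. This is a minimal illustration under stated assumptions: `fake_model` is a stand-in stub rather than a GLM-5 call, and `refine_until_passing` and `check_add` are hypothetical helpers written for this example.

```python
from typing import Callable, Optional, Tuple


def refine_until_passing(
    generate: Callable[[str, Optional[str]], str],
    check: Callable[[str], Optional[str]],
    task: str,
    max_rounds: int = 3,
) -> Tuple[Optional[str], int]:
    """Regenerate code until `check` reports no problem or rounds run out.

    `generate` receives the task plus the previous failure message (or None).
    `check` returns None on success, or a message describing the failure.
    """
    feedback: Optional[str] = None
    for round_number in range(1, max_rounds + 1):
        code = generate(task, feedback)
        feedback = check(code)
        if feedback is None:
            return code, round_number
    return None, max_rounds


def fake_model(task: str, feedback: Optional[str]) -> str:
    # Stand-in for a model call: the first attempt has a bug, the retry fixes it.
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def check_add(code: str) -> Optional[str]:
    # Run the candidate in an isolated namespace and test a single case.
    namespace: dict = {}
    exec(code, namespace)  # acceptable for a demo; sandbox untrusted code in practice
    result = namespace["add"](2, 3)
    return None if result == 5 else f"add(2, 3) returned {result}, expected 5"


if __name__ == "__main__":
    code, rounds = refine_until_passing(fake_model, check_add, "write add(a, b)")
    print(f"passed after {rounds} round(s)")
```

The `max_rounds` cap matters: without it, a model that never converges would loop (and bill) indefinitely.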

Language-Agnostic Architecture Understanding

GLM-5 grasps architectural patterns that transcend specific programming languages. It can suggest microservices patterns in Python, implement them in Node.js, and create corresponding infrastructure configurations, all while maintaining consistent design principles.

Real-Time Collaboration

The model can work alongside human developers, taking on specific subtasks while maintaining awareness of human-written code. This collaborative approach prevents the common AI issue of generating code that conflicts with existing implementations.

Production-Ready Code Generation

GLM-5 emphasizes generating code that’s ready for production environments, including proper error handling, logging, documentation, and security considerations. This addresses one of my biggest frustrations with other AI coding tools – the amount of cleanup required before anything can ship.

Getting Started

The setup process was smoother than expected for a relatively new model. Here’s the path I took:

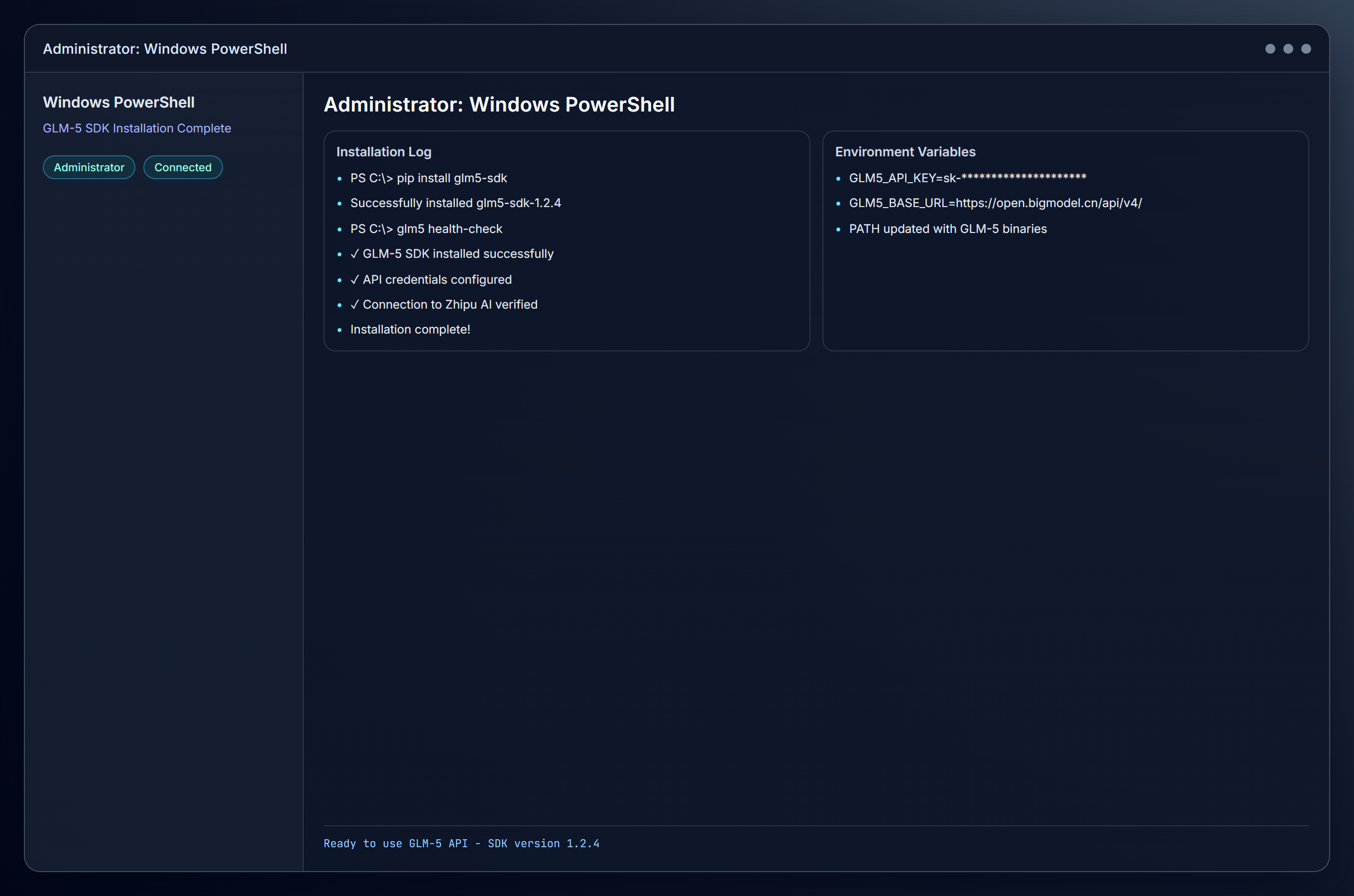

Windows Setup

- Install Python 3.10+ from the Microsoft Store or python.org

- Open PowerShell as Administrator

- Install the GLM-5 SDK: `pip install glm5-sdk`

- Set up your API credentials in your environment variables

- Verify installation by running the GLM-5 health check command

The installation was straightforward, though you’ll need API access from Zhipu AI first. That part took a few days for approval.
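Whichever platform you are on, it helps to fail early when the credential is missing instead of discovering it on the first request. The sketch below is a generic check using only the standard library; the variable name `GLM_API_KEY` is an assumption for illustration, so substitute whatever name your Zhipu AI account setup actually specifies.

```python
import os
from typing import Mapping, Optional


def load_api_key(
    var_name: str = "GLM_API_KEY",  # assumed name; check your provider's docs
    environ: Optional[Mapping[str, str]] = None,
) -> str:
    """Read the API key from the environment, failing loudly if it is absent."""
    env = os.environ if environ is None else environ
    key = env.get(var_name, "").strip()
    if not key:
        raise RuntimeError(
            f"{var_name} is not set. Export it in your shell or add it to "
            "your system environment variables before running the agent."
        )
    return key


def mask(key: str, visible: int = 4) -> str:
    """Hide all but the first few characters so the key is safe to log."""
    return key[:visible] + "*" * max(len(key) - visible, 0)


if __name__ == "__main__":
    try:
        print("Using key:", mask(load_api_key()))
    except RuntimeError as error:
        print("Setup problem:", error)
```

Passing `environ` explicitly keeps the function easy to test without touching the real process environment.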

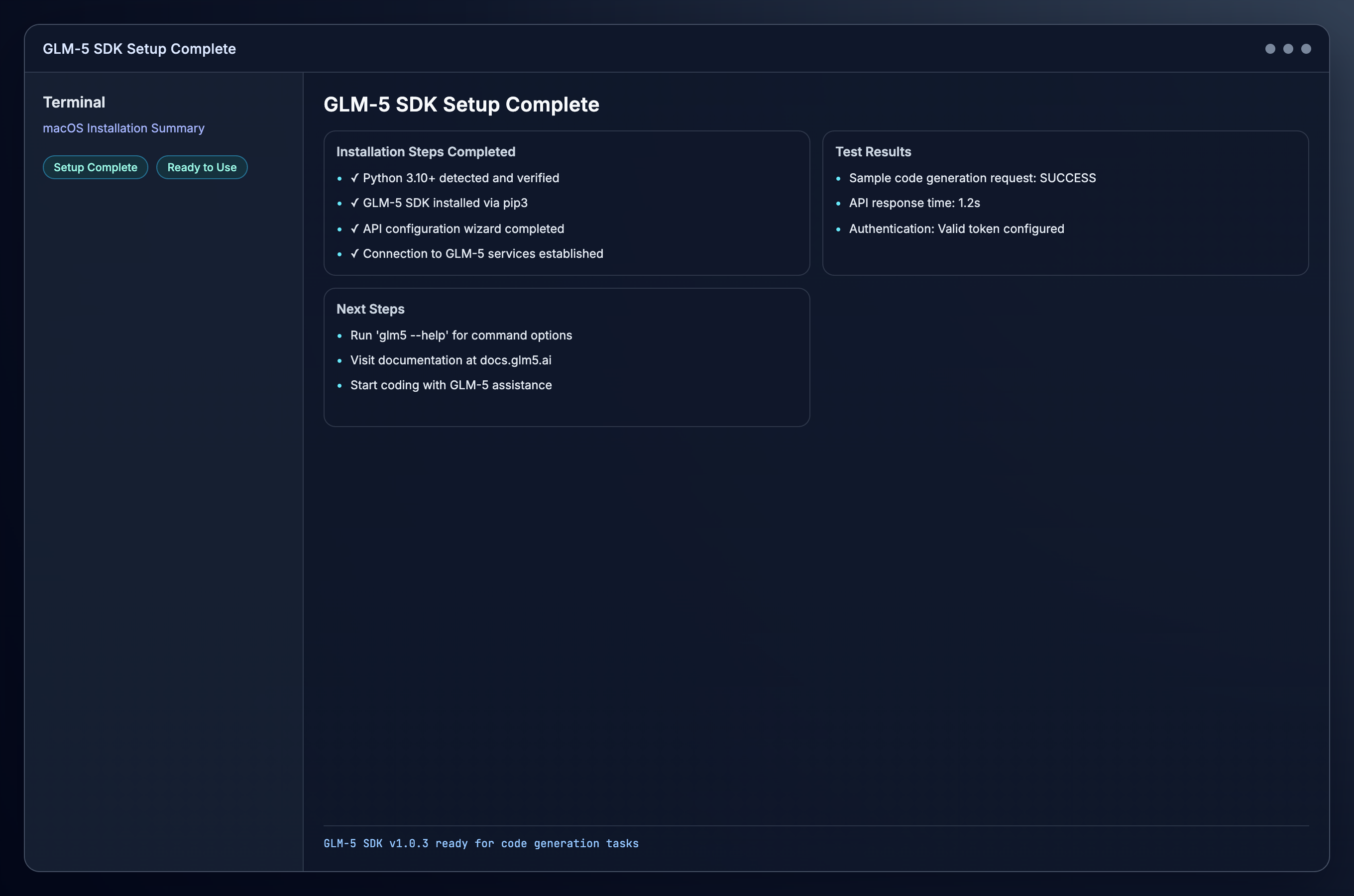

macOS Setup

- Install Python using Homebrew: `brew install python@3.10`

- Open Terminal

- Install the GLM-5 SDK: `pip3 install glm5-sdk`

- Configure API access through the GLM-5 configuration wizard

- Test your setup with a simple code generation request

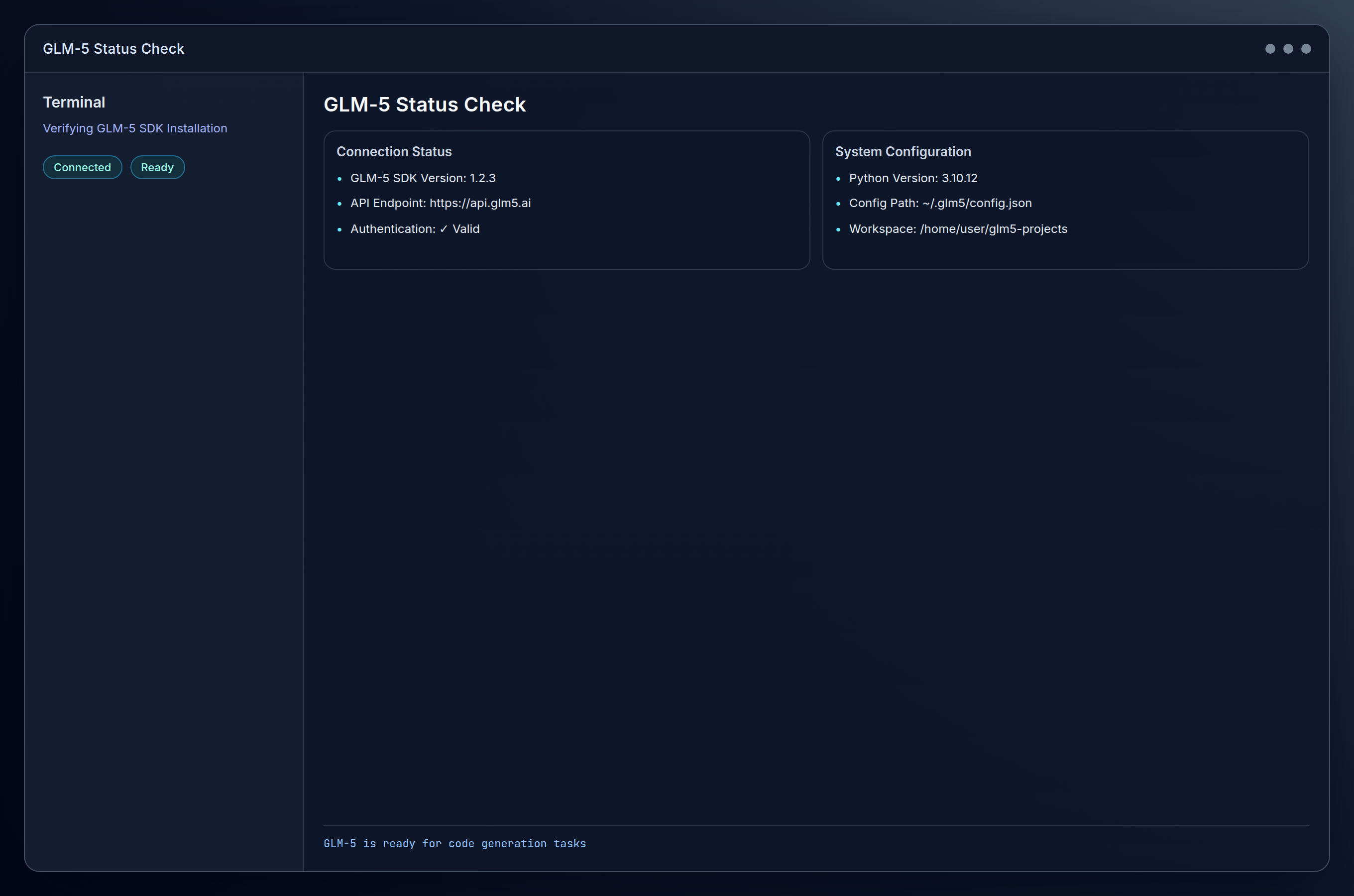

Linux Setup

- Update the package manager: `sudo apt update` (Ubuntu/Debian) or equivalent

- Install Python 3.10+: `sudo apt install python3.10 python3-pip`

- Install the GLM-5 SDK: `pip3 install glm5-sdk`

- Initialize configuration: Run `glm5 init` to set up your workspace

- Verify connectivity with `glm5 status`

For detailed API documentation and advanced configuration options, the official GLM-5 documentation provides comprehensive guides for each platform.

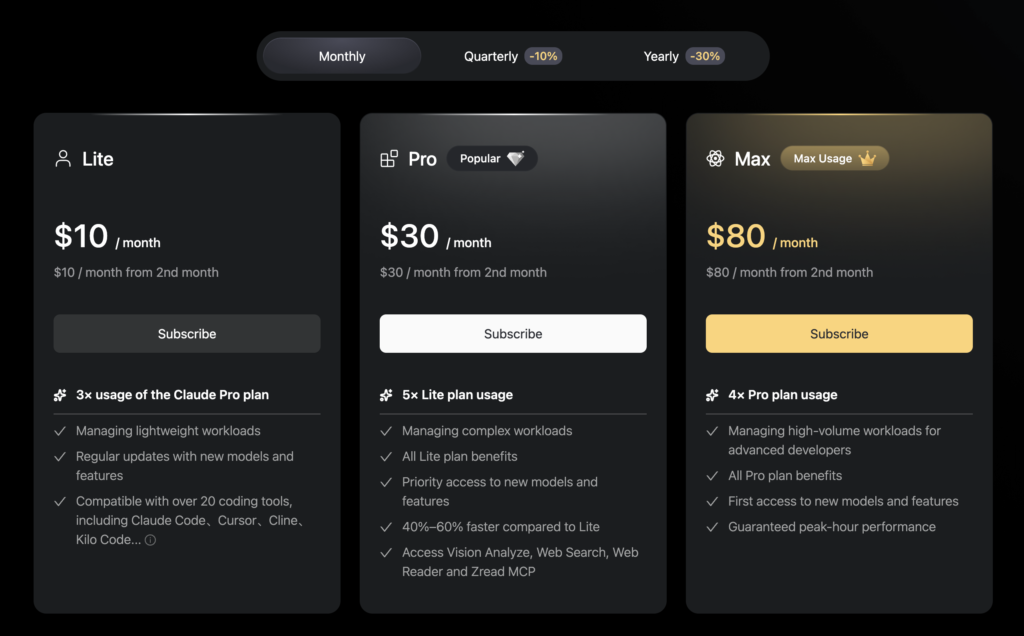

Pricing and Availability

Pricing appears to have been finalized only recently, since the model is quite new. Z.ai lists the GLM Coding Plan tiers (Lite, Pro, Max) on the subscribe page, including what looks like an intro discount and the normal renewal rate. The entry tier works out to about $9/month (typically billed quarterly) or about $7/month on an annual plan, with higher tiers priced for heavier use, and the docs pair each tier with clear usage limits.

These numbers will probably change. Z.ai’s own docs note that pricing and quotas changed around Feb 12, and that existing subscribers keep their earlier price and limits for the rest of their current cycle (and for auto-renew terms set before that date). So before you commit to production use, check the subscribe page for current rates, and consider locking in a good price now.
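For a quick sense of what the billing choice is worth, the arithmetic on the entry-tier figures quoted above is simple enough to script. This sketch assumes the approximate $9/month and $7/month rates from this article and ignores taxes, intro discounts, and any later price changes; the function names are just for illustration.

```python
from typing import Tuple


def yearly_total(monthly_rate: float, months: int = 12) -> float:
    """Total paid over a year at a given effective monthly rate."""
    return monthly_rate * months


def annual_savings(quarterly_rate: float, annual_rate: float) -> Tuple[float, float]:
    """Return (dollars saved per year, percent saved) by choosing annual billing."""
    baseline = yearly_total(quarterly_rate)
    discounted = yearly_total(annual_rate)
    saved = baseline - discounted
    return saved, saved / baseline * 100


if __name__ == "__main__":
    # Approximate entry-tier rates quoted in this article.
    saved, percent = annual_savings(quarterly_rate=9, annual_rate=7)
    print(f"Quarterly billing: ${yearly_total(9):.0f}/year")
    print(f"Annual billing:    ${yearly_total(7):.0f}/year")
    print(f"Savings:           ${saved:.0f} ({percent:.1f}%)")
```

At those rates, quarterly billing comes to $108 a year and annual billing to $84, a difference of $24, or roughly 22%.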

Alternatives to Consider

If GLM-5’s current availability limitations don’t work for your timeline, these established alternatives offer similar capabilities:

- GitHub Copilot: More mature IDE integration and broader language support, though less focused on autonomous agent development

- OpenAI Codex: Strong general coding abilities with the advantage of immediate availability, but requires more manual orchestration for complex workflows

- Claude: Excellent at understanding large codebases and architectural decisions, though not specifically designed for agentic engineering

The Bottom Line

After spending time with GLM-5, I can see what Zhipu AI is building toward. The autonomous agent approach feels like a genuine step forward from traditional AI coding assistance. When it works well, particularly for repetitive development tasks or complex refactoring projects, it’s competitive with Codex and especially with Claude, which is the most expensive of the three.

The model’s emphasis on autonomous task planning and multi-file awareness delivers on its core promise. I found myself delegating entire subsystems rather than just getting help with individual functions. That’s a meaningful difference for certain types of development work.

Pros:

- Purpose-built for autonomous coding workflows that actually work

- Strong multi-file context awareness

- Focus on production-ready code generation

- Innovative approach to task planning and execution

Cons:

- Limited availability, with pricing only recently announced and likely to change

- Newer player without extensive real-world testing

- May require significant integration work

- Documentation and community support still developing

GLM-5 earns a place in the toolkit for teams specifically interested in autonomous coding agents. It’s not ready to replace your primary AI coding assistant, but for specialized workflows, especially around automation and agent development, it offers capabilities that current tools don’t match. Worth watching as it moves toward broader availability.