RAID controller failures on Dell PowerEdge servers can bring your entire infrastructure to a halt. With Dell ending support for R620/R720 series in 2026, you’re facing critical decisions about aging hardware.

This guide covers everything from emergency diagnostics to firmware recovery. We’ll help you minimize downtime whether you’re managing legacy systems or planning migrations.

Quick Diagnosis Steps

Before diving into specific issues, run through these basics. They resolve many RAID controller problems on their own:

- Check iDRAC alerts: Log into your server’s iDRAC and navigate to System > Alerts to see current hardware status

- Verify physical connections: Ensure RAID controller cards are properly seated and cables are secure

- Review system logs: Check Lifecycle Controller Log and System Event Log for recent errors

- Confirm power status: RAID controllers require stable power — check PSU health and redundancy

- Test with known-good drives: Swap in working drives to isolate controller vs. disk issues
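Several of these checks can also be pulled from the command line. The following is a minimal sketch, assuming the RACADM utility is installed and can reach the iDRAC. The `RACADM` variable and the `quick_diag` function name are illustrative, not part of any Dell tooling, and the variable exists so the calls can be dry-run with `echo`.

```shell
# Sketch: gather the logs and sensor data named in the checklist above.
# Assumption: racadm is installed. For remote use, set RACADM to something
# like "racadm -r <idrac-ip> -u <user> -p <password>".
RACADM="${RACADM:-racadm}"

quick_diag() {
    # Subcommands: System Event Log, Lifecycle Controller Log,
    # sensor readings (PSU, temperature), and detected storage controllers.
    for sub in "getsel" "lclog view" "getsensorinfo" "storage get controllers"; do
        echo "=== racadm $sub ==="
        # $RACADM and $sub are left unquoted on purpose so they split into words.
        $RACADM $sub || echo "(failed: racadm $sub)"
    done
}
```

Running `quick_diag > diag.txt` before touching hardware gives you a snapshot to compare against after each fix.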

Common RAID Controller Issues

RAID Controller Not Detected During Boot

Symptoms:

- Server boots but shows no RAID controller during POST

- Ctrl+R prompt doesn’t appear during boot sequence

- Operating system can’t see any storage devices

- iDRAC shows controller as “Not Present” or “Unknown”

Why it happens: Physical hardware failure, firmware corruption, or improper seating of the RAID controller card.

This is one of those gut-punch moments. Your server boots fine but suddenly has no storage. Don’t panic: the cause is often just a loose connection.

Fix:

- Power down the server completely and disconnect power cables

- Remove the server chassis cover and locate the RAID controller card

- Carefully reseat the controller card in its PCIe slot – ensure it clicks firmly into place

- Check that any auxiliary power connectors are properly attached

- Power on and watch for the RAID controller to appear in POST messages

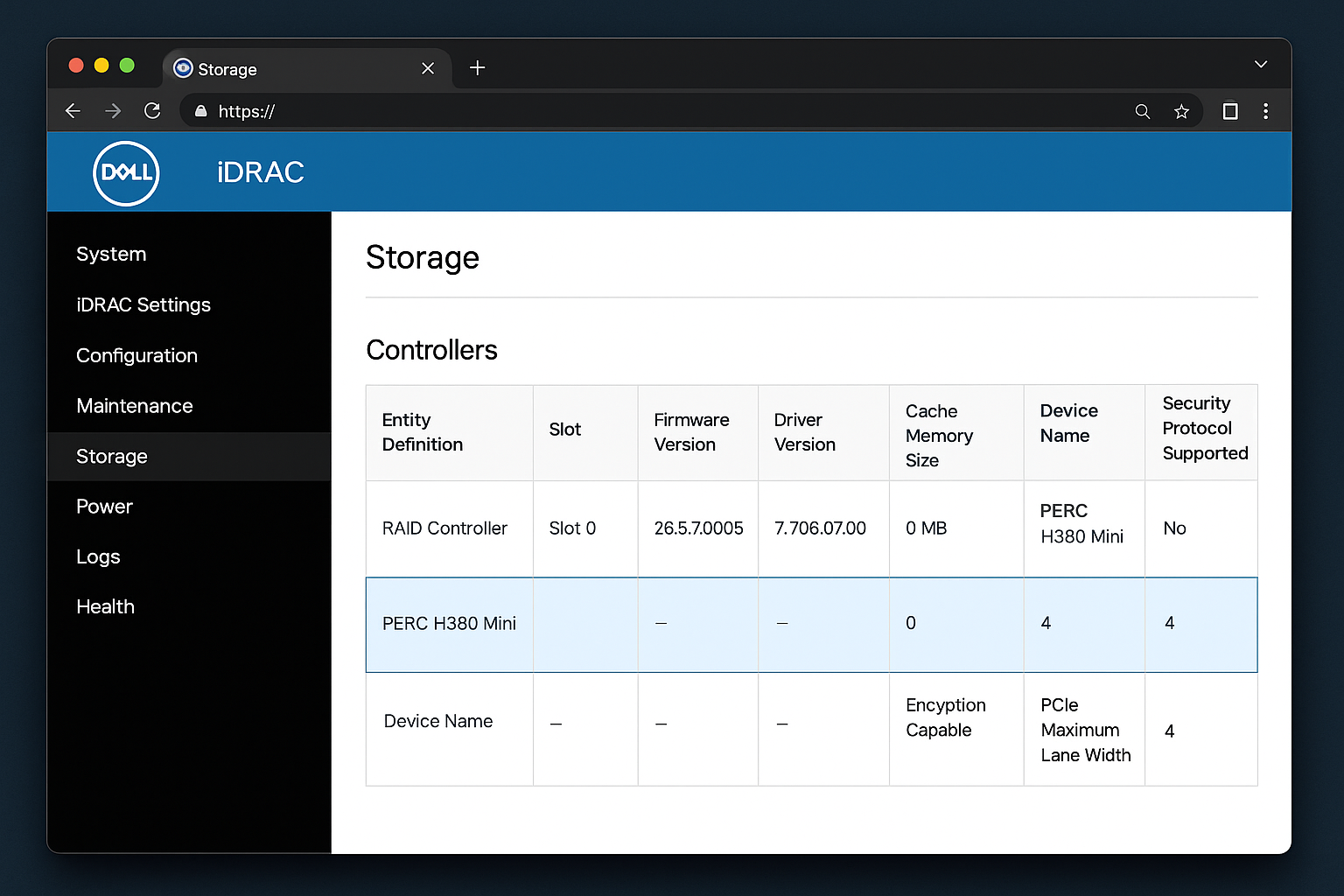

If the controller appears, verify detection in iDRAC. Navigate to Configuration > Storage > Physical Disks. You should see your RAID controller listed with its model number.

If reseating doesn’t work, the controller card itself may have failed. For end-of-life systems like R620/R720, sourcing replacement controllers becomes critical. Contact Dell support immediately if under warranty. Source compatible third-party controllers for out-of-warranty systems.

Verification: Boot to the RAID controller BIOS (usually Ctrl+R during POST) and confirm you can access the configuration utility.
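If the server boots into Linux, the OS-side view is worth a look as well. This is a small sketch, assuming `lspci` is available and that the PERC card is reported as a RAID-class device, which is typical but not guaranteed. The function name `find_raid_controllers` is made up for this example.

```shell
# Sketch: confirm from a Linux OS that the controller is visible on the PCIe bus.
# Reads `lspci` output on stdin and prints RAID-class devices, or warns if none.
find_raid_controllers() {
    if ! grep -Ei 'raid|megaraid'; then
        echo "No RAID controller found on PCIe bus" >&2
        return 1
    fi
}
```

Use it as `lspci | find_raid_controllers`. An empty result after a reseat points back at the card or the slot rather than at the drives.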

Virtual Disk Shows Degraded Status

Symptoms:

- RAID array shows “Degraded” status in iDRAC or OpenManage

- System performance is slower than normal

- Orange warning lights on server front panel

- Email alerts about RAID array health

Why it happens: One or more drives in the RAID array have failed, or there are communication issues between the controller and drives.

A degraded array is your server’s way of saying “I’m injured but still fighting.” You have time to fix this, but not much.

Fix:

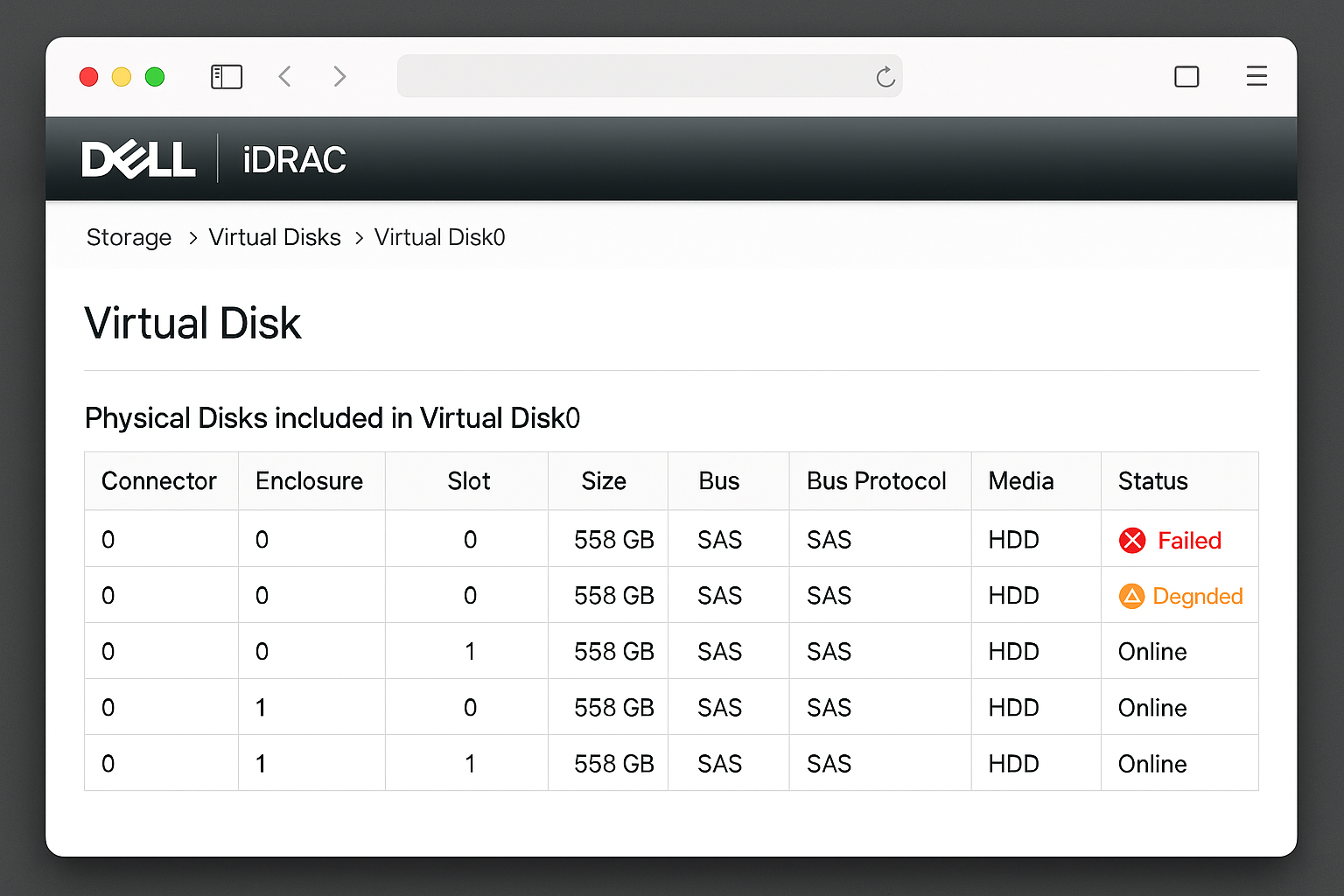

- Access iDRAC and go to Configuration > Storage > Virtual Disks

- Identify which virtual disk is degraded and note the RAID level

- Click on the degraded virtual disk to see member drive status

- Locate any drives showing “Failed” or “Missing” status

- If a drive shows as failed, identify its physical location using the drive bay mapping

- Replace the failed drive with an identical or compatible model

- The RAID rebuild should start automatically – monitor progress in iDRAC

RAID 5 arrays keep running with one failed drive, and RAID 6 arrays with up to two. Replace failed drives quickly anyway, because the array has reduced or no redundancy until the rebuild finishes. A RAID 1 mirror can tolerate one failure. A RAID 0 array becomes completely inaccessible with any drive failure.
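As a quick reference, the tolerance per RAID level can be written down as a lookup. This is a sketch covering only the levels discussed here, and `raid_fault_tolerance` is a hypothetical helper name.

```shell
# Sketch: number of simultaneous drive failures a single array survives.
raid_fault_tolerance() {
    case "$1" in
        0) echo 0 ;;  # striping only, no redundancy
        1) echo 1 ;;  # two-drive mirror
        5) echo 1 ;;  # single parity
        6) echo 2 ;;  # dual parity
        *) echo "unsupported RAID level: $1" >&2; return 1 ;;
    esac
}
```

A degraded array has already used up part of this budget, so a degraded RAID 5 behaves like a RAID 0 until the rebuild finishes.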

Verification: Check that the virtual disk status returns to “Optimal” after the rebuild completes. This can take several hours for large drives.
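To set expectations for the rebuild window, a back-of-the-envelope estimate helps. The sketch below simply divides capacity by a sustained rebuild rate. The rate is an assumption you supply, since real rates depend on the controller, the drive type, and how busy the array is.

```shell
# Sketch: rough rebuild duration in hours.
# $1 = drive capacity in GB (decimal), $2 = assumed sustained rebuild rate in MB/s.
estimate_rebuild_hours() {
    awk -v gb="$1" -v rate="$2" 'BEGIN {
        if (rate <= 0) exit 1            # refuse to divide by zero
        printf "%.1f\n", gb * 1000 / rate / 3600
    }'
}
```

For example, `estimate_rebuild_hours 4000 100` models a 4 TB drive rebuilding at an assumed 100 MB/s. Treat the result as a lower bound, because production I/O competes with the rebuild.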

Firmware Update Failure or Corruption

Symptoms:

- RAID controller firmware update process fails partway through

- Controller appears with incorrect firmware version or “Unknown” status

- Server hangs during boot at RAID controller initialization

- iDRAC shows firmware version as “N/A” or displays error codes

Why it happens: Power interruption during firmware update, incompatible firmware version, or controller hardware failure.

Firmware updates gone wrong are particularly frustrating. They often happen during planned maintenance windows. The good news is that Dell’s Lifecycle Controller is pretty good at recovery.

Fix:

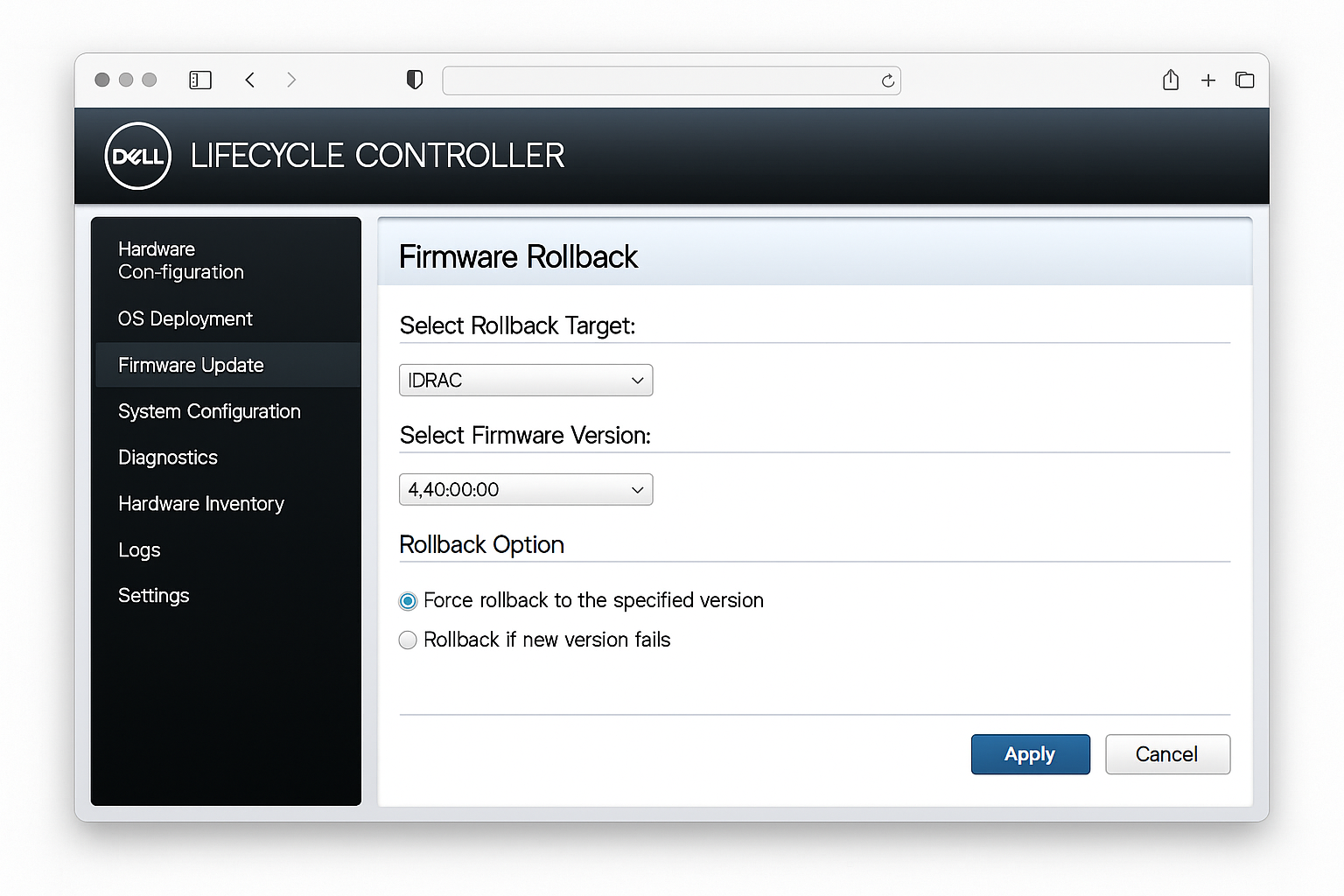

- Boot the server to Dell Lifecycle Controller by pressing F10 during POST

- Navigate to Firmware Update > Launch Firmware Update

- Select Local Drive if you have firmware files on USB, or Network Share for remote files

- If the controller appears in the firmware list, attempt a firmware rollback to the previous version

- For completely unresponsive controllers, try the emergency firmware recovery procedure:

  - Power off the server completely

  - Remove and reseat the RAID controller card

  - Boot to Lifecycle Controller and attempt firmware recovery mode

# For 2026 end-of-life systems, download firmware from Dell's legacy support site

# Example URLs for common controllers:

# H710: https://www.dell.com/support/home/product-support/product/poweredge-r720/drivers

# H755: https://www.dell.com/support/home/product-support/product/poweredge-r750/drivers

Critical for 2026: Dell’s firmware support for R620/R720 series ends this year. Download and archive all firmware versions now while they’re still available.

Verification: Confirm the firmware version matches your target version. Check both iDRAC storage status and the RAID controller BIOS.
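Archived firmware is only useful if you can trust it later, so it is worth recording checksums when you save the files. This is a minimal sketch, assuming GNU `sha256sum` and a flat directory of downloaded packages. The function names and the `SHA256SUMS` manifest name are choices made for this example.

```shell
# Sketch: copy firmware packages into an archive directory and record checksums.
# $1 = directory holding downloaded firmware files, $2 = archive directory.
archive_firmware() {
    src="$1"; dest="$2"
    mkdir -p "$dest" || return 1
    cp "$src"/* "$dest"/ || return 1
    # Build the manifest in a temp file so it never hashes itself.
    tmp=$(mktemp) || return 1
    ( cd "$dest" && for f in *; do
          [ "$f" = "SHA256SUMS" ] || sha256sum -- "$f"
      done ) > "$tmp" && mv "$tmp" "$dest/SHA256SUMS"
}

# Re-check an archive later; returns non-zero if any file no longer matches.
verify_firmware_archive() {
    ( cd "$1" && sha256sum -c SHA256SUMS )
}
```

Run `verify_firmware_archive` before any future flash, so a corrupted file is caught before it reaches the controller.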

Performance Degradation and Slow I/O

Symptoms:

- Database queries taking much longer than normal

- File transfers to/from the server are unusually slow

- High I/O wait times shown in system monitoring

- Applications timing out on storage operations

Why it happens: Incorrect cache settings, failing drives that haven’t been detected yet, thermal throttling, or suboptimal RAID configuration.

Performance issues are sneaky. They creep up slowly until someone notices the database is taking forever to respond. The usual culprit is cache configuration.

Fix:

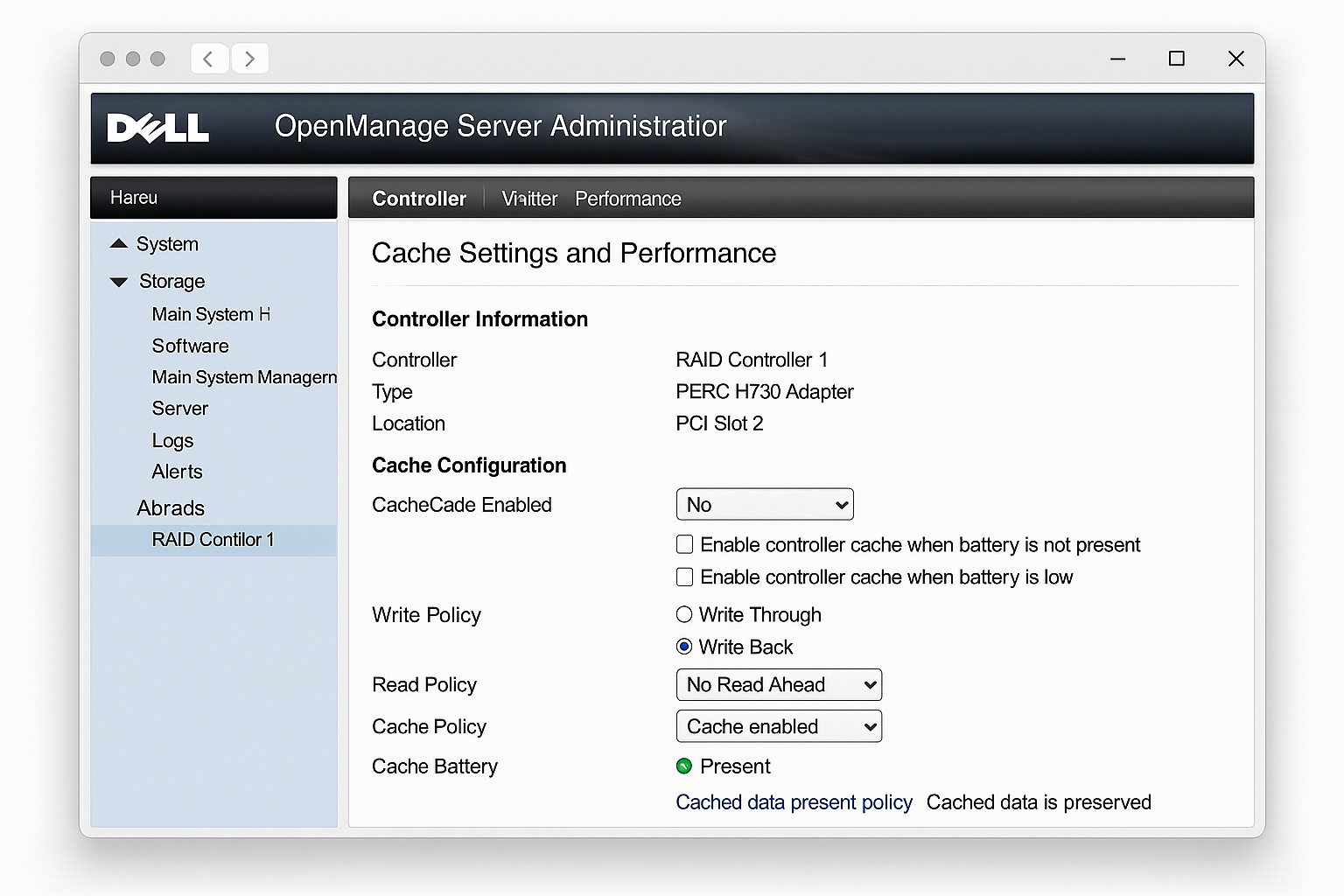

- Check RAID controller cache settings in iDRAC under Storage > Controllers

- Verify Write Policy is set to Write Back (not Write Through) for performance arrays

- Ensure Read Policy is set to Read Ahead for sequential workloads

- Check drive health metrics for early warning signs:

  - Navigate to Storage > Physical Disks

  - Review Predictive Failure Analysis status for each drive

  - Look for drives with high error counts or reallocated sectors

- Monitor controller temperature and ensure proper airflow:

# Check thermal status via iDRAC RACADM (if available)

racadm get System.ThermalSettings

racadm getsensorinfo

- For write-heavy workloads, consider enabling Force Write Back if you have battery backup or UPS protection

Verification: Run I/O benchmarks before and after changes to measure improvement. Tools like fio or dd can provide baseline metrics.
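For a quick baseline with `dd`, something like the following can work. It is a sketch, assuming GNU `dd` on Linux and a writable directory on the array under test. It measures sequential writes only, so it complements a proper `fio` run rather than replacing one.

```shell
# Sketch: sequential write baseline with dd.
# $1 = directory on the array under test, $2 = size in MiB (default 1024).
dd_write_baseline() {
    target="$1/dd-baseline.$$"
    # conv=fsync flushes the data before dd reports its figure, so the
    # OS page cache does not inflate the result.
    dd if=/dev/zero of="$target" bs=1M count="${2:-1024}" conv=fsync 2>&1 | tail -n 1
    rm -f "$target"
}
```

Record the summary line before changing the write policy, then run the same command afterwards with the same size so the two figures are comparable.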

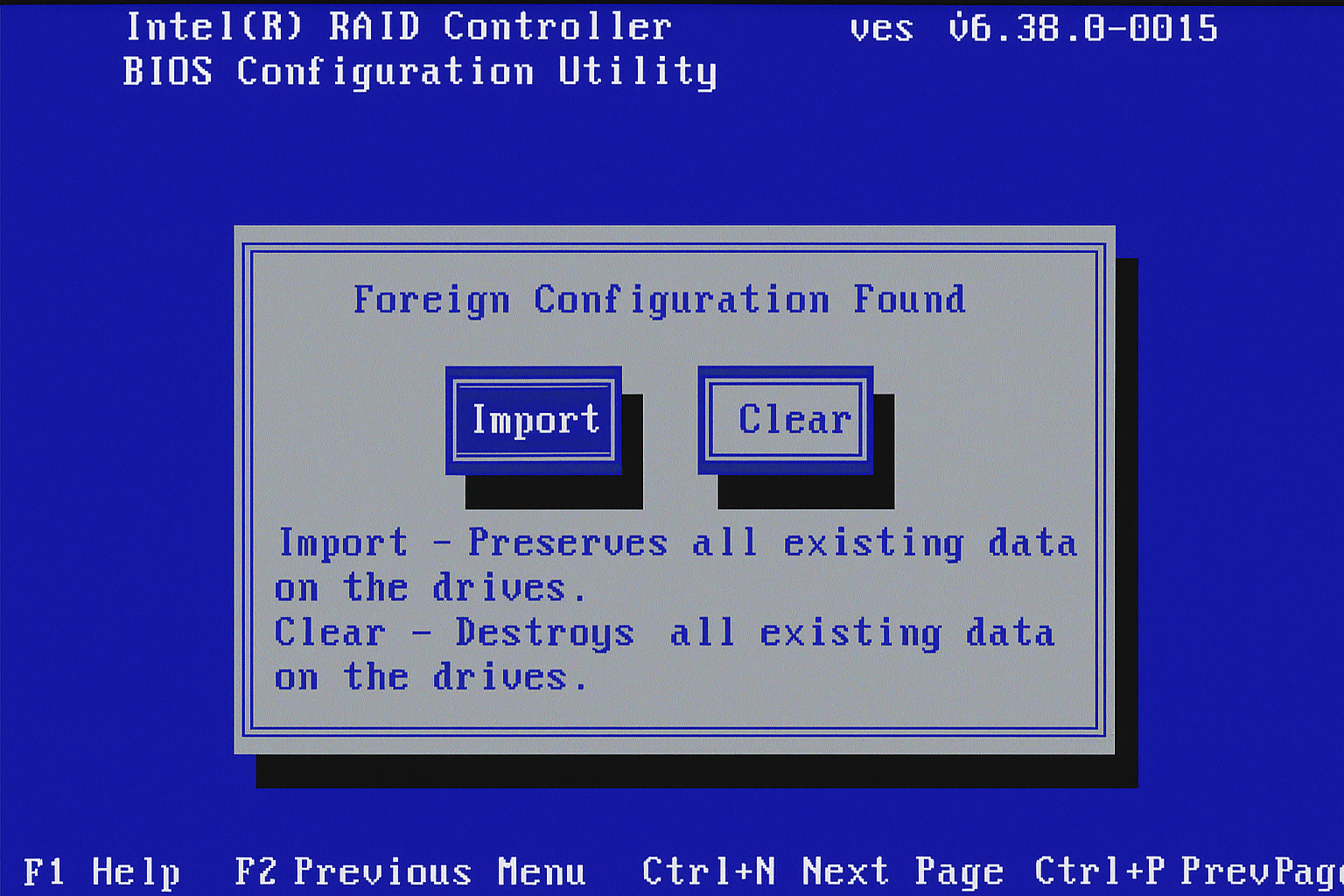

Foreign Configuration Detected

Symptoms:

- Server boots but shows “Foreign configuration detected” message

- Existing RAID arrays are not accessible

- iDRAC shows drives as “Foreign” status

- Data that should be available is not visible to the operating system

Why it happens: Drives were moved from another system, RAID controller was replaced, or previous controller failure left configuration metadata on the drives.

This message appears when your RAID controller finds drives with existing configuration data. It’s asking whether you want to keep that data or start fresh.

Fix:

CRITICAL: This decision affects data availability. Choose carefully based on your backup status.

- Access the RAID controller BIOS during boot (Ctrl+R)

- Navigate to Foreign Configuration menu

- You have two options:

Option A: Import Foreign Configuration (preserves data)

- Select Import to bring in the existing RAID configuration

- Use this if the drives contain data you need to recover

- Verify the imported configuration matches your expectations

Option B: Clear Foreign Configuration (destroys data)

- Select Clear to remove existing configuration and start fresh

- Use this only if you have complete backups or the data is not needed

- This allows you to create new RAID arrays with these drives

- After making your choice, exit the RAID BIOS and allow the system to boot

- Verify that your virtual disks appear correctly in iDRAC storage management

Verification: Check that all expected RAID arrays are present and accessible to the operating system.
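If you would rather inspect the foreign configuration from a running OS before deciding, Dell’s PERC CLI can show it without changing anything. This is a hedged sketch: it assumes `perccli` is installed (the binary is sometimes named `perccli64`), that it supports your controller generation, and that the controller index is 0. The `PERCCLI` variable and function name are illustrative.

```shell
# Sketch: inspect a foreign configuration before committing to import or clear.
# Assumptions: perccli is installed and works with this controller.
# Both commands below are read-only.
PERCCLI="${PERCCLI:-perccli}"

preview_foreign_config() {
    ctrl="${1:-0}"   # controller index, 0 unless the server has several
    echo "--- foreign configurations on controller $ctrl ---"
    $PERCCLI "/c$ctrl/fall" show all || return 1
    echo "--- what an import would create (nothing is written) ---"
    $PERCCLI "/c$ctrl/fall" import preview
}
```

Compare the preview with your documented RAID layout. If the two disagree, stop and check your backups before importing or clearing anything.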

Error Messages Reference

| Error Message | Meaning | Immediate Action |
|---|---|---|
| “RAID controller battery failed” | Battery backup unit needs replacement | Replace controller battery; disable write-back cache until replaced |
| “Virtual disk degraded” | One or more drives failed in array | Identify failed drives; replace immediately |
| “Foreign configuration detected” | Drives have existing RAID metadata | Choose import (keep data) or clear (lose data) |
| “Controller not responding” | Firmware hang or hardware failure | Power cycle; attempt firmware recovery |
| “Patrol read failed” | Drive surface scan found errors | Check drive health; consider replacement |
| “Consistency check failed” | RAID parity errors detected | Run manual consistency check; replace suspect drives |

Platform-Specific Issues

iDRAC Integration Problems

Issue: RAID controller not visible in iDRAC web interface

Solution:

- Verify iDRAC firmware is compatible with your RAID controller generation

- Reset iDRAC to defaults: iDRAC Settings > Network > Factory Defaults

- Update iDRAC firmware before attempting RAID controller updates

iDRAC and RAID controller compatibility can be finicky, especially with mixed generations. When in doubt, update iDRAC first.

Issue: Remote firmware updates failing through iDRAC

Solution:

- Use local Lifecycle Controller instead of remote iDRAC updates

- Ensure network connectivity is stable during update process

- For 2026 mixed environments, update iDRAC first, then RAID controller firmware

OpenManage Console Issues

Issue: OpenManage can’t communicate with RAID controller

Solution:

- Verify OpenManage Server Administrator version compatibility

- Restart the Dell OpenManage Server Administrator service

- For Windows: Check that Dell OpenManage Server Administrator Storage Management Service is running

# Linux OpenManage service restart

sudo systemctl restart dsm_sa_datamgrd

sudo systemctl restart dsm_sa_eventmgrd

# Windows PowerShell service restart

Restart-Service "Dell OpenManage Server Administrator"

Configuration Best Practices

Cache Settings for Different Workloads

Database Servers (OLTP):

Write Policy: Write Back (with battery backup)

Read Policy: No Read Ahead

Cache I/O: Direct I/O

File Servers:

Write Policy: Write Back

Read Policy: Read Ahead

Cache I/O: Cached I/O

Backup/Archive Systems:

Write Policy: Write Through (if no battery backup)

Read Policy: Read Ahead

Cache I/O: Cached I/O

Hot Spare Configuration

Always configure global hot spares for critical systems:

- Reserve one drive per 6-8 drives in your RAID arrays

- Use drives of equal or larger capacity than your largest array member

- Configure automatic rebuild policies to minimize intervention

Hot spares are cheap insurance. They kick in automatically when a drive fails. This starts the rebuild process without waiting for you to notice the problem.
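The one-spare-per-6-8-drives rule is easy to turn into a number when you are sizing a chassis. A small sketch follows, with the ratio as a parameter. `hot_spares_needed` is a hypothetical helper, and it rounds up so a partly filled group still gets a spare.

```shell
# Sketch: hot spares to reserve, rounding up.
# $1 = total drives in the arrays, $2 = drives covered per spare (default 8).
hot_spares_needed() {
    drives="$1"; per_spare="${2:-8}"
    echo $(( (drives + per_spare - 1) / per_spare ))
}
```

For a 24-bay server, `hot_spares_needed 24` uses the lenient end of the range, and `hot_spares_needed 24 6` uses the conservative end.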

Getting Help and Support Resources

Log Locations and Debug Information

iDRAC Logs:

- System Event Log: Hardware events and errors

- Lifecycle Controller Log: Firmware updates and configuration changes

- Storage Log: RAID-specific events and drive status changes

Export logs for support cases:

- Navigate to Maintenance > Diagnostics > Export Logs

- Select Hardware Logs and Storage Logs

- Download the generated zip file for Dell support

Dell Support Escalation

For systems under warranty:

- Use Dell’s automated support at support.dell.com

- Have your Service Tag ready (found on server chassis or in iDRAC)

- Reference specific error codes from system logs

For end-of-life systems (2026 considerations):

- Dell ProSupport ends for R620/R720 series in Q4 2026

- Consider third-party support contracts for extended lifecycle management

- Document all configurations before support ends

Community Resources

- Dell TechDirect: Official Dell community forum for IT professionals

- r/sysadmin: Reddit community with extensive Dell PowerEdge discussions

- Spiceworks: IT professional network with Dell hardware troubleshooting guides

Prevention and Maintenance

Proactive Monitoring Setup

- Configure SNMP monitoring in iDRAC for automated alerting

- Set up email notifications for RAID controller events

- Schedule monthly consistency checks during maintenance windows

- Monitor drive health metrics weekly for early failure detection

The best RAID problems are the ones you catch before they become emergencies. Proper monitoring catches degraded drives before they take down your arrays.
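If SNMP or email alerting is not yet in place, a scheduled script can cover the gap. The sketch below filters virtual disk output for any disk that is not online. It assumes the layout printed by `racadm storage get vdisks -o`, where an unindented identifier line is followed by indented `Key = Value` lines. Verify that against your own iDRAC output before relying on it.

```shell
# Sketch: flag virtual disks whose State is not "Online".
# Reads `racadm storage get vdisks -o` style output on stdin.
# Returns 1 if any disk is flagged, 0 otherwise.
check_vdisk_state() {
    awk '
        /^[^ \t]/ { fqdd = $0 }                 # unindented line names the disk
        $1 == "State" && $2 == "=" {
            if ($3 != "Online") { print "ALERT " fqdd ": " $3; bad = 1 }
        }
        END { exit bad }
    '
}
```

Piping `racadm storage get vdisks -o` into `check_vdisk_state` from cron, and mailing any output, gives a basic early warning until proper monitoring is configured.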

2026 Migration Planning

Assessment Framework:

- Inventory all RAID controllers by generation and support status

- Calculate replacement costs vs. extended support contracts

- Plan staged migrations to minimize business disruption

- Document all RAID configurations before hardware changes

Budget Planning:

- New PowerEdge servers with current RAID controllers: $8,000-$15,000+ per server

- Third-party RAID controller replacements: $500-$2,000 per controller

- Extended support contracts: 20-40% of original hardware cost annually
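To compare these options, it helps to work out how many years of extended support cost as much as a replacement. This is a rough sketch with hypothetical inputs drawn from the ranges above. It ignores power, downtime risk, and resale value.

```shell
# Sketch: years of extended support that cost as much as a replacement server.
# $1 = original hardware cost, $2 = annual support rate in percent,
# $3 = price of a replacement server. All inputs are planning assumptions.
support_break_even_years() {
    awk -v orig="$1" -v pct="$2" -v repl="$3" 'BEGIN {
        annual = orig * pct / 100        # yearly support spend
        if (annual <= 0) exit 1
        printf "%.1f\n", repl / annual
    }'
}
```

For instance, `support_break_even_years 10000 30 12000` models a server that originally cost $10,000, a 30% annual contract, and a $12,000 replacement.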

Wrapping Up

You now have the tools for diagnosing and resolving Dell PowerEdge RAID controller issues. Act quickly on degraded arrays. Plan ahead for the 2026 support transitions.

RAID controller failures will happen. It’s not a matter of if, but when. Having these procedures ready means the difference between a planned maintenance window and an emergency outage.

| Issue Type | Immediate Action | Long-term Solution |
|---|---|---|
| Hardware failure | Replace controller/drives | Plan hardware refresh |
| Firmware corruption | Lifecycle Controller recovery | Archive firmware versions |
| Performance issues | Optimize cache settings | Monitor proactively |
| EOL systems | Document configurations | Budget for migrations |